AI-Based Coding and Alienation

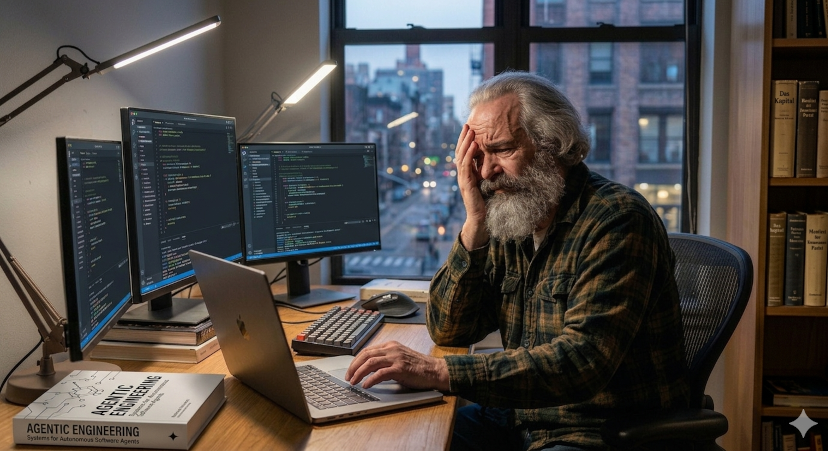

Karl, senior developer and open source contributor, feels like a stranger in his own codebase

Recently, while asking myself why programming with AI support feels so unsatisfying to me, the term alienation came to my mind.

Specifically, I am referring to the theory of alienation by Karl Marx (for those interested: described here in the original).

As soon as I had this thought, I was already tempted to discard it again. Because obviously Marx had something else in mind which is not related to working with code - be it with or without AI.

Even at first glance it is obvious that one of the central conditions of Marx’s theory is invalid when it comes to coding: there can be no talk of the worker (here: the programmer) having no direct access to, no control over the means of production (here: a PC/Laptop and access to the internet).

The exact opposite seems to be true: actually even a teenager with a passion for programming can start their own business, e.g. as a web developer and earn good money this way, without noteworthy financial savings, without professional training, and without an employer.

At second glance however, especially when we are talking about programming with AI support… is the association perhaps not as far-fetched as it seemed?

I would like to establish two premises for the following:

- This is not a Marxist analysis.

I neither want to prove nor refute the applicability of the Marxist theory of alienation to the tech industry.

The aspects of alienated labor which are described by Karl Marx merely serve as a template here, a starting point, from which I can further elaborate my thoughts.

- This is about working on personal projects.

Be it as a hobby, as a contribution to an open source project, or with commercial intent. Including work done for your own startup.

But I am not talking about being an employee in a software development job who gets to enjoy the daily pleasures of endless bugfixes, of maintaining outdated spaghetti code, or of working on projects of highly questionable value and purpose.

In such cases, which constitute the daily work routine of many programmers, a proud identification with the code produced at the job will not happen anyway. With or without AI support.

Alienation from your own program

Marx describes four aspects of alienation. The first is the alienation of the worker from the product of his labor.

Although he took part in its production the final product does not belong to him, appears foreign to him, and even gives others power and dominion over him.

When I build a project with AI support, the feeling of estrangement from the final product is very pronounced for me and gave birth to these reflections.

Can you really claim ownership over something that you merely described and initiated but which for the most part has been generated by a large language model (LLM)?

If I stand beside an artist in their studio and tell them what to paint for me, am I the artist, the creator of the painting? Most certainly not.

Then why would it be any different for code, a program, software?

Also, precisely the claim that the final product which is alien to us gives others power over us deserves to be examined in the case of AI-based programming.

The possibility to have AI agents do the work for you is often described as “empowering” programmers.

Now they are no longer required to possess a lot of experience or specialized knowledge, nor do they have to be particularly well-versed in the programming language and tools of choice for a project. In fact, they do not even necessarily need to have any knowledge in programming in order to create impressive and complex applications and earn money with them.

However, this comes at a cost.

For one thing, someone who got used to having the majority of their code generated by Claude & Co. will very soon lose overview and comprehension of their codebase.

Secondly, paid subscriptions are required for intensive usage of AI agents and the prices are not likely to go down over the course of the years (with the cost/damage to nature being a separate topic in its own right).

And lastly: making yourself dependent on ChatGPT, Claude, Cursor and their likes means making yourself dependent on nonfree proprietary software. And thus dependent on the mercy of third parties.

So will those who lean heavily on AI agents for writing most of their code still be able to maintain their existing AI-generated codebases? Will they be able to expand them and implement new features or projects on their own if the subscription prices for the most powerful LLMs become too steep, or if the big AI monopolists restrict access? That is highly doubtful.

Ultimately, it is not the programmer who gets empowered by increased usage of AI agents while losing money, skills, control, autonomy, and in the end ownership of their code.

Who gets empowered are solely Scam Altman, the evil demon Asmodei and the likes, whom we grant power over us by making ourselves dependent on their products in all aforementioned aspects.

(Short aside regarding the puns: to those with a penchant for outrageous and quaint conspiracy theories I warmly recommend this article. Seriously, this is a real treat for the inclined man of culture!)

Alienation from programming

But according to Marx the worker does not only feel alienated from the product of his labor but already from the process of working/production.

His work feels like an outside force, is no part of him and leads to a sense of unhappiness caused by the inability to realize his potential. His skills and talents are not challenged and developed but lie idle.

This too sounds familiar if you let AI agents write the majority of your code.

A programmer relying on AI agents is largely no longer challenged himself but becomes a sort of babysitter for the coding AI.

Some outright celebrate this and declare that manual writing (and reading) of code will soon be a thing of the past.

Yet “use it or lose it” also applies to programming knowledge. And intensive usage of LLMs for programming leads to noticeable loss or at least decrease of your own skills.

This phenomenon has become a topic among programmers even now, so soon after the emergence of (somewhat) capable AI coding agents. What will this be like after several years of “vibe coding” and “agentic engineering”?

Additionally, feelings of joy, of being intellectually stimulated in order to find solutions, a sense of learning and improving, of achieving something and of creating - none of that happens when you spend hours discussing their output with LLMs.

The joy in programming, in learning, in puzzling is replaced by “efficient production” and feelings of dependency, powerlessness and eventually maybe even uselessness.

Alienation from the programmer-species-being

When Marx talks about the species-being of man he means that which is common among all humans, inherited upon birth, what constitutes being human. So at the end of the day if we leave the terminological games aside, this does refer to a fixed, timeless human nature whose existence is denied by Marxism.

Be that as it may, Marx also observes an alienation of the worker from the species-being of man/human nature.

In our case, Marx’s observation can be applied to an alienation of the programmer from “programmer nature”.

Sometimes we can read and hear that the correct job description for software engineers should rather be “problem solver” (which, by the way, I think is quite silly).

Whatever we think about this, undeniably the solving of problems, the transfer of ideas into code, the writing of, tinkering with and optimizing of code is something which the majority of programmers enjoy. We could say: what defines their activity, what is part of their programmer nature.

But can you really still call yourself a programmer if you mainly lead discussions with LLMs for hours on end and are less and less capable of writing code on your own?

Of course this way we still see a transfer of ideas into code, optimization of code, and the solving of problems (ideally). But the programmer descends from the position of being the active force and instead becomes more and more of a passive supervisor and instructor.

And as of yet certainly nobody has claimed that this is what defines the nature of being a programmer.

Alienation from other programmers

As a final aspect of alienation Marx names the alienation of man from his fellow human beings.

Indeed, in coding with AI agents we can observe a tendency to view interaction with others as a waste of time: why should we have long discussions, consider different solutions, and seek contact with potentially “problematic personalities” if AI can spit out working code in the blink of an eye?

Now of course we can discuss if it really is such a big loss that we no longer have to rely on the “friendly” and “welcoming” community at Stack Overflow when looking for an answer to our coding questions.

Nevertheless, there, elsewhere, and especially in personal contact you always had the chance to learn new things and discover new approaches through discussion with others. Blindly copying someone else’s code without any further interest or understanding of their own was always the hallmark of a bad programmer and could lead to nasty surprises.

In theory there is of course the option of critically analyzing the AI’s output and asking the AI for subsequent explanations. In practice however it usually boils down to: why bother as long as it does what it is supposed to do. That the quality of this code is quite questionable and will cause problems sooner or later seems to be a matter of no particular importance.

One can’t help but notice that with the most ardent adepts of “agentic engineering” there is no longer much of a desire to increase knowledge and to safeguard code quality: the formerly shunned approach “Just copy and paste something together, who cares why and how it works as long as it works” is nowadays becoming popular. And not only at the corporate workplace.

You gotta go fast, Sanic! Things have to move quickly, ideally results should be available right away, and other people with other opinions and their annoying questions and objections just get in the way.

A sense of belonging to a community or the frequently invoked “vibrant community” will hardly arise this way if other people are perceived as inefficient interferences or even outright competition.

The result is social and economic pressure to utilize AI for coding and to be more efficient than the others instead of working and building together and learning from each other.

Concluding observations

This brings us to the response to a popular objection: “Then why don’t you just stop using AI! Nobody is forcing you in case you prefer to be old-fashioned and manually write all your code!”

Ah, but actually we are being forced, as I have just outlined above. At the job, it becomes increasingly difficult to explain to your boss that you would prefer to spend a few hours on doing research, thinking, reading documentation, and trying out different approaches in order to solve a problem (which you then genuinely understand) instead of simply asking AI to spit out some ready-made code.

In private too, even with pure hobby projects, there is always a feeling that by completely (or largely) avoiding AI we are working very inefficiently and wasting a lot of time which could have been put to productive use elsewhere.

The price that we then pay for “efficient AI usage” is the alienation in all aspects, as described. Pride and joy in what we do, being creative, pursuing our passion, and improving ourselves is increasingly sacrificed on the altar of “efficiency”.

What has often been inevitable in the workplace is now seeping into our leisure activities and hobbies too, thanks to AI. Our passions are being tainted.

Naturally this is not only true for programming but can be applied to other professional fields and hobbies as well: artists, authors, and so on.

Is there a solution to this problem? I am afraid the answer is: no. Even if there is, I for one cannot see it.

A general, large-scale reversal of course and change of mind that leads to a mindful and critical usage of AI, be it in programming or elsewhere, appears to me to be unrealistic wishful thinking.

History shows that ideas, developments, and processes do not disappear again once they have entered this world. Instead, they often continue to march on.

Even if it is obvious to everyone that they are negative developments.